This blog is based on Jong-han Kim's Linear Algebra

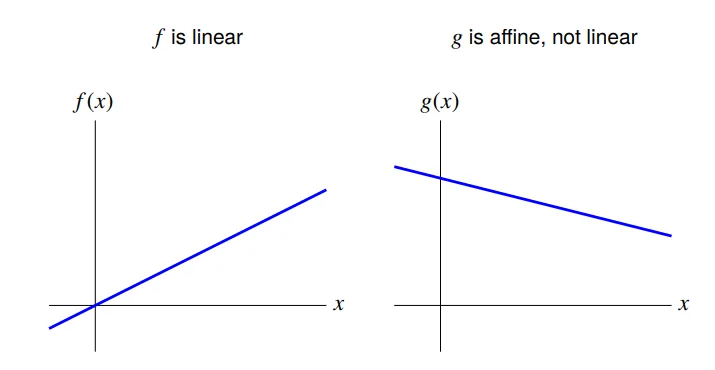

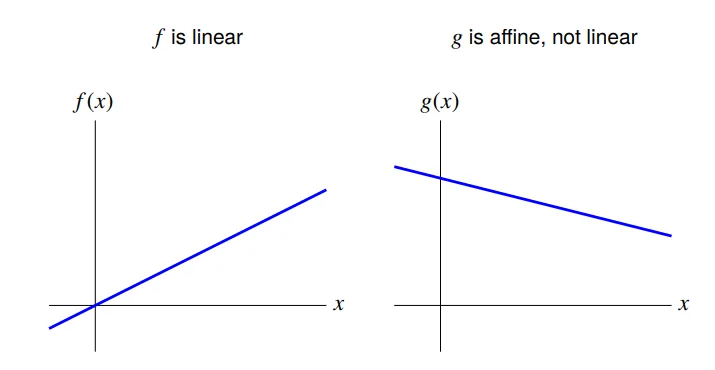

Superposition and linear functions

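Consider a function $f:\mathbf{R}^n\to\mathbf{R}$.

$f$ satisfies the superposition property if

$$f(\alpha x+\beta y)=\alpha f(x)+\beta f(y)$$

holds for all scalars $\alpha,\beta$ and all $n$-vectors $x,y$.

- A function that satisfies superposition is called linear.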

The inner product function

With $a$ an $n$-vector, the function

$$f(x)=a^Tx=a_1x_1+a_2x_2+\cdots+a_nx_n$$

is the inner product function.

The inner product function is linear

$$f(\alpha x+\beta y)=a^T(\alpha x+\beta y)=a^T(\alpha x)+a^T(\beta y)=\alpha(a^Tx)+\beta(a^Ty)=\alpha f(x)+\beta f(y)$$
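As a quick numerical sanity check of the identity above, here is a minimal Python sketch. The vectors and scalars are made-up illustration values, and `inner` and `combine` are hypothetical helper names, not part of the original notes.

```python
import math

def inner(a, x):
    """Inner product a^T x of two equal-length vectors given as lists."""
    return sum(ai * xi for ai, xi in zip(a, x))

def combine(alpha, x, beta, y):
    """Linear combination alpha*x + beta*y, computed elementwise."""
    return [alpha * xi + beta * yi for xi, yi in zip(x, y)]

# Made-up values, chosen only for illustration.
a = [2.0, -1.0, 0.5]
x = [1.0, 3.0, -2.0]
y = [0.5, -4.0, 6.0]
alpha, beta = 1.5, -0.7

lhs = inner(a, combine(alpha, x, beta, y))       # f(alpha*x + beta*y)
rhs = alpha * inner(a, x) + beta * inner(a, y)   # alpha*f(x) + beta*f(y)

# Compare with a tolerance because of floating-point rounding.
superposition_holds = math.isclose(lhs, rhs)
print(superposition_holds)
```

A single check like this does not prove linearity, which requires the identity for all $\alpha,\beta,x,y$; it only illustrates the algebra.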

All linear functions are inner products

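Suppose $f:\mathbf{R}^n\to\mathbf{R}$ is linear.

Then it can be expressed as $f(x)=a^Tx$ for some $n$-vector $a$.

Specifically, $a_i=f(e_i)$, where $e_i$ is the $i$th unit vector.

This follows from

$$f(x)=f(x_1e_1+x_2e_2+\cdots+x_ne_n)=x_1f(e_1)+x_2f(e_2)+\cdots+x_nf(e_n)$$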
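The recipe $a_i=f(e_i)$ can be tried directly: evaluate a linear function on each unit vector and collect the results. The sketch below assumes a hypothetical linear function `f` on 3-vectors; the helper names `unit_vector` and `recover_coefficients` are also invented for illustration.

```python
def unit_vector(i, n):
    """The i-th unit vector of length n (0-based index i)."""
    return [1.0 if j == i else 0.0 for j in range(n)]

def recover_coefficients(f, n):
    """Recover a with f(x) = a^T x by evaluating f at each unit vector."""
    return [f(unit_vector(i, n)) for i in range(n)]

def f(x):
    """A hypothetical linear function, treated here as a black box."""
    return 3.0 * x[0] - 2.0 * x[1] + 0.5 * x[2]

a = recover_coefficients(f, 3)

# The recovered a reproduces f at an arbitrary test point.
x = [4.0, 1.0, -2.0]
reconstructed = sum(ai * xi for ai, xi in zip(a, x))
print(a, reconstructed, f(x))
```

If `f` were not linear, the recovered `a` would in general fail to reproduce `f(x)` at other points.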

Affine functions

A function that is linear plus a constant is called affine.

The general form is $f(x)=a^Tx+b$, with $a$ an $n$-vector and $b$ a scalar.

A function $f:\mathbf{R}^n\to\mathbf{R}$ is affine if and only if

$$f(\alpha x+\beta y)=\alpha f(x)+\beta f(y)$$

holds for all $\alpha,\beta$ with $\alpha+\beta=1$, and all $n$-vectors $x,y$.
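To see why the restriction $\alpha+\beta=1$ matters, the following sketch checks the identity for a hypothetical affine function with a nonzero offset, once with weights that sum to one and once with weights that do not. All numbers and the helper name `identity_holds` are assumptions made for illustration.

```python
import math

a = [1.0, -2.0]   # made-up coefficient vector
b = 3.0           # made-up nonzero offset

def f(x):
    """Affine function f(x) = a^T x + b."""
    return sum(ai * xi for ai, xi in zip(a, x)) + b

def identity_holds(alpha, beta, x, y):
    """Check f(alpha*x + beta*y) == alpha*f(x) + beta*f(y) up to rounding."""
    combo = [alpha * xi + beta * yi for xi, yi in zip(x, y)]
    return math.isclose(f(combo), alpha * f(x) + beta * f(y))

x = [2.0, 1.0]
y = [-1.0, 4.0]

holds_when_sum_is_one = identity_holds(0.3, 0.7, x, y)   # alpha + beta = 1
holds_when_sum_is_two = identity_holds(1.0, 1.0, x, y)   # alpha + beta = 2
print(holds_when_sum_is_one, holds_when_sum_is_two)
```

With $\alpha=\beta=1$ the right-hand side contains the offset twice ($2b$) while the left-hand side contains it once, so the two sides differ by $b$.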

First-order Taylor approximation

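Suppose $f:\mathbf{R}^n\to\mathbf{R}$ is differentiable.

The first-order Taylor approximation of $f$ near the point $z$ is

$$\hat f(x)=f(z)+\frac{\partial f}{\partial x_1}(z)(x_1-z_1)+\cdots+\frac{\partial f}{\partial x_n}(z)(x_n-z_n)$$

$\hat f(x)$ is very close to $f(x)$ when all $x_i$ are near $z_i$.

$\hat f$ is an affine function of $x$.

It can be written using an inner product as

$$\hat f(x)=f(z)+\nabla f(z)^T(x-z)$$

where the $n$-vector $\nabla f(z)$ is the gradient of $f$ at $z$,

$$\nabla f(z)=\left(\frac{\partial f}{\partial x_1}(z),\ldots,\frac{\partial f}{\partial x_n}(z)\right)$$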
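The following sketch illustrates how the approximation behaves near and far from $z$. It assumes a hypothetical example function $f(x)=x_1+e^{x_2-x_1}$, whose gradient is worked out by hand; the function, the points, and the helper name `taylor` are illustration choices, not taken from the original notes.

```python
import math

def f(x):
    """Hypothetical example function f(x) = x1 + exp(x2 - x1)."""
    return x[0] + math.exp(x[1] - x[0])

def grad_f(z):
    """Gradient of this particular f at z, derived by hand."""
    e = math.exp(z[1] - z[0])
    return [1.0 - e, e]

def taylor(f, grad_f, z):
    """Return the first-order Taylor approximation of f near z as a function."""
    fz, g = f(z), grad_f(z)
    def f_hat(x):
        # f(z) + grad f(z)^T (x - z)
        return fz + sum(gi * (xi - zi) for gi, xi, zi in zip(g, x, z))
    return f_hat

z = [1.0, 2.0]
f_hat = taylor(f, grad_f, z)

x_near = [0.96, 1.98]   # close to z
x_far = [1.5, 1.0]      # farther from z
err_near = abs(f(x_near) - f_hat(x_near))
err_far = abs(f(x_far) - f_hat(x_far))
print(err_near, err_far)
```

The approximation matches $f$ exactly at $z$, the error is small at the nearby point, and it is much larger at the distant one.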

Regression model

The regression model is the affine function of $x$

$$\hat y=x^T\beta+\nu$$

- $x$ is a feature vector; its elements $x_i$ are called regressors

- the $n$-vector $\beta$ is the weight vector

- the scalar $\nu$ is the offset

- the scalar $\hat y$ is the prediction
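Evaluating the model is just an inner product plus the offset. Here is a minimal sketch; the weights, offset, and feature values are made-up numbers, and `predict` is a hypothetical helper name.

```python
def predict(x, beta, nu):
    """Regression prediction y_hat = x^T beta + nu."""
    if len(x) != len(beta):
        raise ValueError("x and beta must have the same length")
    return sum(xi * bi for xi, bi in zip(x, beta)) + nu

# Made-up parameters and features, for illustration only.
beta = [2.0, -1.0]   # weight vector
nu = 0.5             # offset
x = [3.0, 4.0]       # feature vector (regressors)

y_hat = predict(x, beta, nu)   # 2*3 + (-1)*4 + 0.5
print(y_hat)
```

In practice $\beta$ and $\nu$ would be fitted from data; that step is outside the scope of this sketch.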